Hallucination-free Enterprise AI – Verifiable answers with sources and audit trails

Imagine you are a leader preparing for a strategic move. You are presented with figures generated by the system. The text is coherent and the style is convincing. There is just one problem. It is not true.

The model confidently asserts something that is actually a random blend of past data and a recent news item. For you, this is a significant decision risk, because accuracy is the foundation of well-supported decision-making. In creative writing, this kind of invention might be an advantage, but in business, it is a failure.

The question is how you can achieve a hallucination-free enterprise AI when the technology is fundamentally built on probabilities.

When confidence is not evidence

Public Large Language Models act like well-read but occasionally confused librarians. They have seen a vast amount of content, yet they cannot recall exactly where an insight originated, so they fill in the blanks based on statistical likelihood. When building an enterprise knowledge base, this uncertainty is an unacceptable risk.

What is hallucination in artificial intelligence?

A hallucination occurs when a model presents false information as fact because it relies on patterns instead of citing a specific source.

In a professional setting, a "probably good" answer is not enough. You need verifiable, source-bound operations where the machine retrieves rather than improvises.

How grounding and RAG technology work

We see the solution in a well-established architecture: Retrieval-Augmented Generation, or RAG. Think of it as an open-book exam. The system does not rely on uncertain background knowledge but works directly from your verified internal documents.

How does grounding work?

- Search – gathering relevant details from your documents.

- Grounding – passing the results as context to the model.

- Answering – formulating responses based on provided sources without an internet search.
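The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not MIRA's implementation: the `Passage` type, the function names, and the prompt wording are all hypothetical, simple word overlap stands in for a real search index, and `generate` is a placeholder for whatever model call your deployment uses.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Passage:
    doc_id: str   # hypothetical document identifier
    section: str  # hypothetical section label
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search(query: str, passages: list[Passage], top_k: int = 2) -> list[Passage]:
    """Step 1, Search: rank passages by word overlap with the query.

    Word overlap is a stand-in for a real keyword or vector index.
    """
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(p.text)), p) for p in passages]
    scored = [(score, p) for score, p in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


def build_grounded_prompt(query: str, hits: list[Passage]) -> str:
    """Step 2, Grounding: pass the retrieved passages to the model as context."""
    context = "\n".join(
        f"[{i}] ({p.doc_id}, {p.section}) {p.text}"
        for i, p in enumerate(hits, start=1)
    )
    return (
        "Answer using only the numbered sources below and cite them as [n]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


def answer(query: str, passages: list[Passage], generate: Callable[[str], str]) -> str:
    """Step 3, Answering: call the model only when sources were found."""
    hits = search(query, passages)
    if not hits:
        # Refusing is safer than letting the model improvise without sources.
        return "No supporting source found in the internal documents."
    return generate(build_grounded_prompt(query, hits))
```

The design choice worth noting is the early return in `answer`: when retrieval finds nothing, the model is never asked, so it has no opportunity to fill the gap with a plausible guess.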

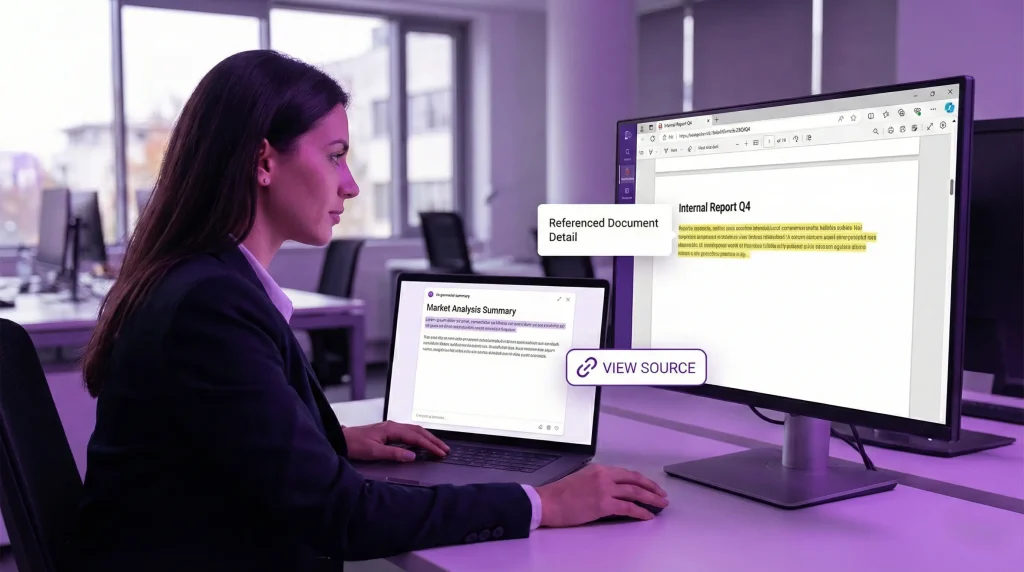

We built MIRA on this logic as a knowledge base optimized for your internal files and accessible within your company. With clickable links attached to answers, your team can jump directly to the referenced section, making facts quickly verifiable.

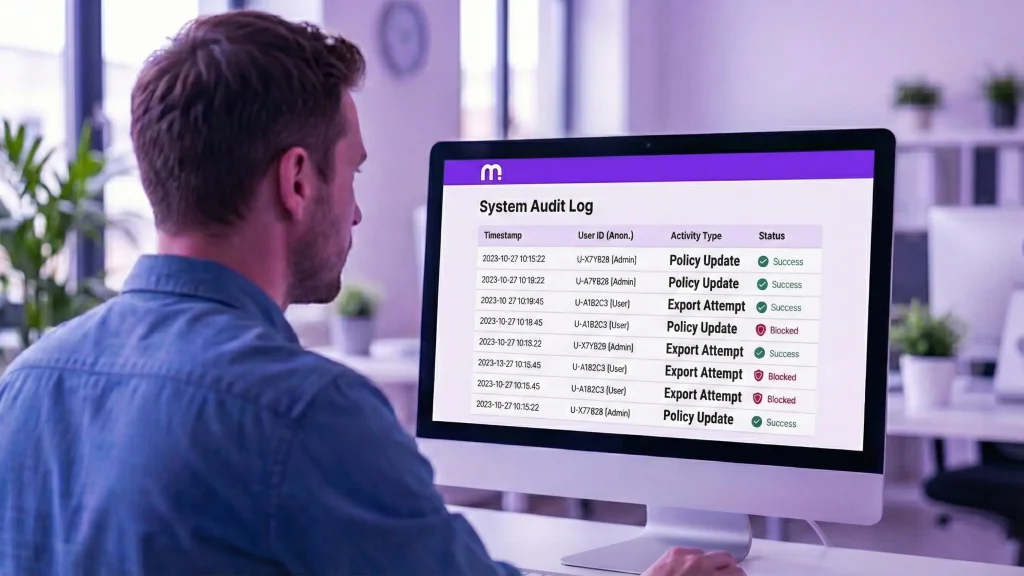

Trust with control – Permissions, logging, DLP, and data residency

Legal security is a fundamental requirement for you. Opaque systems represent a business risk, which is why MIRA supports compliance readiness for NIS2 and the AI Act. Beyond credibility, you need visibility into who has access to what within the organization.

Strategic planning is essential before deployment, as we detailed in our guide for implementing knowledge platforms. We believe security begins with a closed environment, which is why MIRA's on-premise deployment offers a controlled alternative to Shadow AI. As explained in our risk analysis, the greatest danger of public tools is the uncontrolled data flow.

What makes an internal AI controlled?

- On-premise deployment – your data does not enter a public, shared environment.

- RBAC – role-based access to specific documents.

- Audit trail and logging – every interaction remains traceable.

- DLP – data loss prevention filters help mitigate the risk to sensitive information.

- AI governance – a framework for responsible use.

- Data residency – helps ensure that data stays on servers controlled by you.
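To make three of these controls concrete, here is a minimal Python sketch that combines a role check (RBAC), an audit log entry for every request, and a simple DLP filter. Everything in it is an assumption for illustration: the `Document` and `ControlledStore` types, the log fields, and the email-masking rule are hypothetical and do not describe MIRA's internals. A production DLP filter would cover far more than email addresses.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Document:
    doc_id: str
    allowed_roles: frozenset[str]  # roles permitted to read this document
    text: str


# Hypothetical DLP rule: mask anything that looks like an email address.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text: str) -> str:
    """DLP: replace email-like strings before the text leaves the store."""
    return EMAIL_PATTERN.sub("[REDACTED]", text)


@dataclass
class ControlledStore:
    documents: list[Document]
    audit_log: list[dict] = field(default_factory=list)

    def fetch(self, user: str, roles: set[str], doc_id: str) -> Optional[str]:
        """Return the redacted text if any of the user's roles is allowed."""
        doc = next((d for d in self.documents if d.doc_id == doc_id), None)
        allowed = doc is not None and bool(roles & doc.allowed_roles)

        # Audit trail: record every request, including denied ones.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "doc_id": doc_id,
            "allowed": allowed,
        })
        return redact(doc.text) if allowed else None
```

Logging denied requests as well as granted ones is deliberate: an audit trail that only records successes cannot show who tried to reach a document they were not entitled to see.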

How source attribution ensures traceability

Knowledge sharing creates real business value only when all information remains traceable. As a leader, you need to see the connections, and for that, you need accurate data.

What is source attribution? The precise and traceable marking of the documents used to generate an answer.

MIRA leads your team directly to the relevant section of the original file with a single click. This helps you avoid the risk of knowledge loss and makes onboarding easier for new employees. The point of reference remains the source document, and uncertainty is significantly reduced. Unlike the output of a general chatbot, these answers are auditable.
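One way to picture source attribution is as a data structure: each answer carries references that point to a document, a section, and the excerpt it relied on. The Python sketch below is an illustration only. The `SourceRef` and `AttributedAnswer` types, the link format, and the `base_url` parameter are hypothetical and are not taken from MIRA.

```python
from dataclasses import dataclass
from urllib.parse import quote


@dataclass(frozen=True)
class SourceRef:
    doc_path: str    # hypothetical path of the source document
    section_id: str  # hypothetical anchor of the cited section
    excerpt: str     # the passage the answer relies on

    def link(self, base_url: str) -> str:
        """Build a clickable link that jumps to the cited section."""
        return f"{base_url}/{quote(self.doc_path)}#{quote(self.section_id)}"


@dataclass(frozen=True)
class AttributedAnswer:
    text: str
    sources: tuple[SourceRef, ...]

    def render(self, base_url: str) -> str:
        """Return the answer followed by a numbered list of source links."""
        lines = [self.text, "", "Sources:"]
        for i, ref in enumerate(self.sources, start=1):
            lines.append(f"[{i}] {ref.link(base_url)}")
        return "\n".join(lines)


def unsupported_sources(answer: AttributedAnswer, corpus: dict[str, str]) -> list[SourceRef]:
    """Return references whose excerpt is not found verbatim in the cited document.

    `corpus` maps a document path to its full text.
    """
    return [
        ref for ref in answer.sources
        if ref.excerpt not in corpus.get(ref.doc_path, "")
    ]
```

A check like `unsupported_sources` is what turns a citation from decoration into evidence: if the quoted excerpt cannot be found in the cited file, the reference is flagged instead of trusted.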

Hallucination-free Enterprise AI – Evidence-based decision-making and ROI

Instead of confident guesswork, base your processes on evidence. MIRA supports your decisions with facts, as accuracy is the foundation of sustainable business operations. We help you mobilize your own data assets in a source-verified and controlled environment across the organization.

When the foundation of your decision-making is verifiable, the benefit goes beyond security and compliance: it translates directly into measurable time and cost savings. We use a straightforward ROI calculator to estimate where a hallucination-free enterprise AI delivers value the fastest.

In our experience, the business impact is most significant across four key areas.

- Retaining Critical Knowledge – Shorter handover overlap periods and less knowledge loss during key role transitions.

- Faster Onboarding – Drastically shortened learning curves for new employees.

- Freed-up Mentoring Time – Senior experts spend less time answering repetitive questions.

- Efficient Information Retrieval – Fewer wasted hours searching through internal documents and policies.
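The arithmetic behind an estimate like this is simple, and the Python sketch below shows one plausible shape for it. It is not the calculator referred to in this article: the `SavingsArea` type, the field names, and the formula (net savings divided by cost) are assumptions for illustration, and every input has to come from your own figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SavingsArea:
    name: str                    # e.g. one of the four areas listed above
    hours_saved_per_year: float  # your own estimate, not a benchmark
    hourly_cost: float           # loaded hourly cost of the people affected

    @property
    def annual_saving(self) -> float:
        return self.hours_saved_per_year * self.hourly_cost


def total_saving(areas: list[SavingsArea]) -> float:
    """Sum the estimated yearly savings across all areas."""
    return sum(area.annual_saving for area in areas)


def roi(areas: list[SavingsArea], annual_platform_cost: float) -> float:
    """Return ROI as a ratio: (savings - cost) / cost."""
    if annual_platform_cost <= 0:
        raise ValueError("annual_platform_cost must be positive")
    return (total_saving(areas) - annual_platform_cost) / annual_platform_cost


def fastest_value(areas: list[SavingsArea]) -> SavingsArea:
    """Return the area with the largest estimated yearly saving."""
    return max(areas, key=lambda area: area.annual_saving)
```

Keeping each area as a separate entry makes it easy to see which of the four contributes most, rather than hiding everything in a single total.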

If you want to see the exact figures, we can send you the calculator, or we can run the numbers together using your company's actual data during a consultation.

[banner type="mira" text="Estimate the true value of auditable AI for your business." button="Request a Data-Driven Consultation" link="https://encomira.hu/contact"]